Generative AI has been at a fever pitch since last summer. The field is undeniably home to the most enthralling technical developments on the scene, with no indication that this will change for the remainder of 2023.

While image creation has grabbed a lot of attention, headlines have been dominated by OpenAI’s ChatGPT since its release. Of course, there’s an account with my name on it, and I have kicked the tires a few times. But as with any online platform, I’m reluctant to pour myself into repositories that exist on servers out of my control.

This concern is why I have crowed loudly in the past about offerings like Stable Diffusion and buttoned-up pieces of software like Diffusion Bee and Draw Things. The same concerns are why I am now paying attention to recent open source large language models (LLMs).

Obviously, I’ve enjoyed playing around with image generators over the last year, but getting access to editorial creation without needing to type all my thoughts into a browser or make a large expenditure on GPUs has been on my mind for a while.

Compressing these massive models down to sizes that can be distributed across the Web and run on much lighter machinery is no small feat. Luckily, there has been a team willing to put in the compute.
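One common technique for shrinking models enough to run locally is quantization: storing each weight in a few bits plus a scale factor shared by a block of weights. The Python sketch below shows a simple symmetric 4-bit scheme. The block size, rounding rule, and the `approx_size_gb` helper are illustrative assumptions, not the exact file format GPT4All uses.

```python
from typing import List, Tuple

BLOCK_SIZE = 32  # weights that share one scale factor (illustrative choice)
QMAX = 7         # symmetric range used here: -7..7 (4 bits can hold -8..7)


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map a block of floats to small integers plus one shared float scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / QMAX if max_abs > 0 else 1.0
    q = [max(-QMAX, min(QMAX, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Approximately reconstruct the original floats."""
    return [scale * v for v in q]


def quantize(weights: List[float], block_size: int = BLOCK_SIZE) -> List[Tuple[float, List[int]]]:
    """Split a weight vector into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + block_size])
            for i in range(0, len(weights), block_size)]


def approx_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Rough storage size in gigabytes, ignoring file metadata."""
    return n_params * bits_per_weight / 8 / 1e9


weights = [0.7, -0.35, 0.0, 0.14, -0.69]
blocks = quantize(weights, block_size=4)
restored = [w for scale, q in blocks for w in dequantize_block(scale, q)]
print(restored)  # close to the originals, within half a scale step each
print(approx_size_gb(13e9, 16), approx_size_gb(13e9, 4.5))  # 26.0 7.3125
```

For a 13-billion-parameter model like the ones discussed in this article, the size helper gives roughly 26 GB at 16-bit precision versus about 7.3 GB at 4.5 effective bits per weight (4-bit values plus, by assumption, one 16-bit scale per 32-weight block). The trade-off is a small loss of precision in every weight.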

I recently downloaded an offering that packages small models into a standalone piece of software. Nomic’s GPT4All runs editorial generative models locally on your machine. Nomic offers the option to send data back to its servers to help improve its work, but that is not a requirement. Best of all, the software is available for macOS, Linux, and Windows. Try it for yourself.

After the initial download and installation of the Groovy model, I decided that we’re going to learn together. Of course, I pointed it at one of my favorite topics: the 1893 Chicago World’s Fair. The first prompt was a little rough, but so were the first images I made with Midjourney last summer.

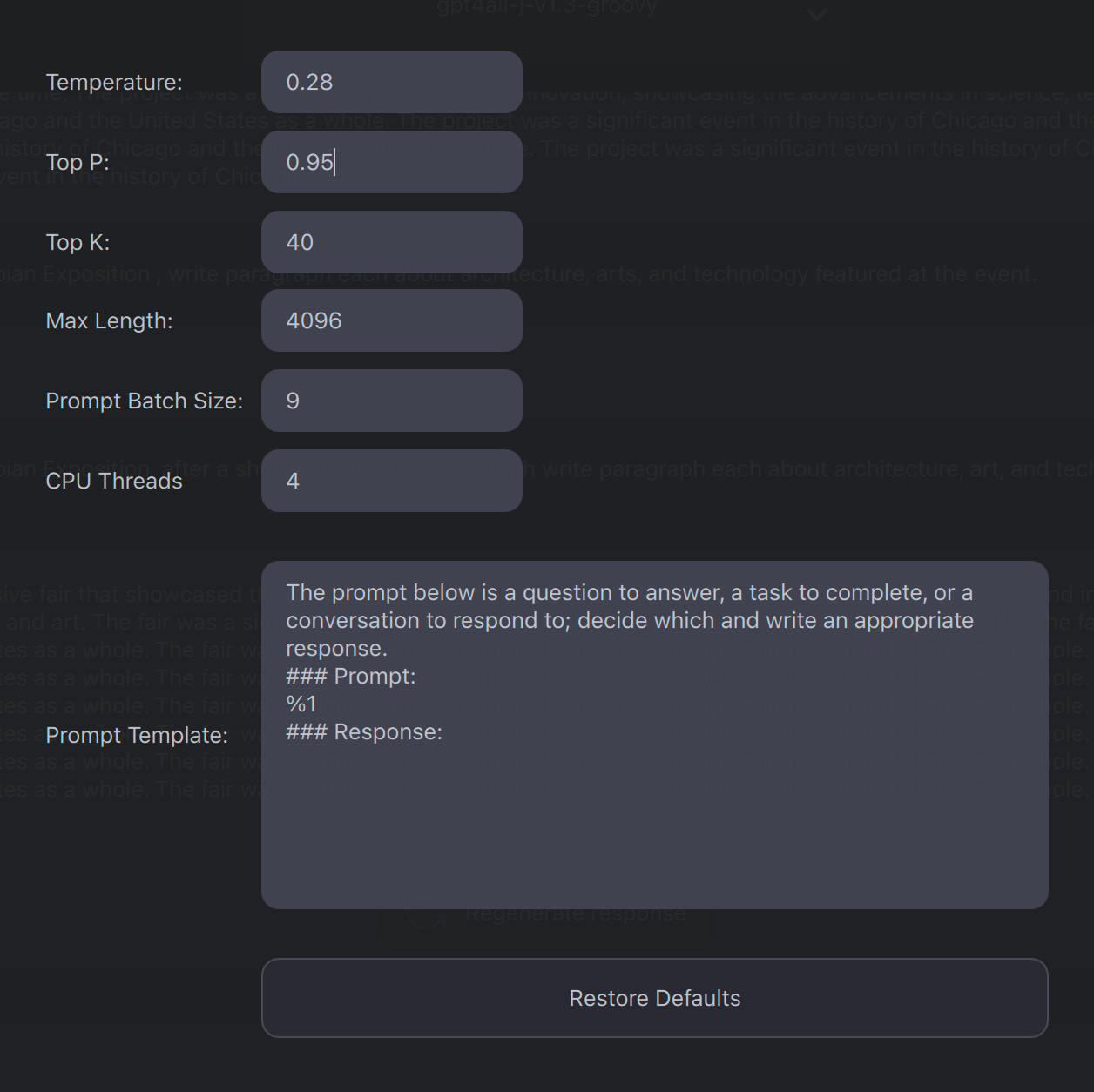

One of my favorite aspects of Stable Diffusion is the ability to adjust parameters and iterate, and those same options exist in GPT4All. You can tweak the settings, though getting more insightful results is going to take some experimentation; still, I know it is possible. My first prompt resulted in repetitive loops.
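Generation settings of this kind typically include temperature, top-k, and a repeat penalty, though the exact names and defaults vary by GPT4All version, so treat them as assumptions here. The Python sketch below shows how such knobs commonly shape the choice of each next token from a model’s raw scores (logits). The penalty rule follows a common convention (divide positive scores, multiply negative ones); the token scores and parameter values are made up for illustration.

```python
import math
import random
from typing import Dict, List, Optional


def sample_next_token(
    logits: Dict[str, float],
    recent_tokens: List[str],
    temperature: float = 0.7,
    top_k: int = 40,
    repeat_penalty: float = 1.3,
    rng: Optional[random.Random] = None,
) -> str:
    """Pick the next token from raw model scores (a simplified sketch)."""
    rng = rng or random.Random()

    # 1. Repeat penalty: make recently used tokens less attractive.
    adjusted = {}
    for token, score in logits.items():
        if token in recent_tokens:
            score = score / repeat_penalty if score > 0 else score * repeat_penalty
        adjusted[token] = score

    # 2. Top-k: keep only the k highest-scoring candidates, best first.
    candidates = sorted(adjusted.items(), key=lambda kv: kv[1], reverse=True)[:top_k]

    # A temperature of zero means always take the single best token (greedy).
    if temperature <= 0:
        return candidates[0][0]

    # 3. Temperature: scale scores before the softmax. Lower values sharpen
    #    the distribution; higher values flatten it and add more "chaos".
    best = candidates[0][1]  # subtract the max for numerical stability
    weights = [math.exp((score - best) / temperature) for _, score in candidates]
    tokens = [token for token, _ in candidates]
    return rng.choices(tokens, weights=weights, k=1)[0]


logits = {"fair": 2.0, "the": 2.2, "city": 1.0}
print(sample_next_token(logits, recent_tokens=["the"], temperature=0))  # fair
```

Without the penalty, greedy decoding would pick "the" again; with it, the recently used token drops below "fair". Raising the repeat penalty is one lever that can discourage repetitive loops like the ones my first prompt produced.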

Later, I downloaded the Vicuna 13B-parameter model and, with a more carefully constructed prompt, was able to get it to compose a short essay that doesn't stumble over itself in the same ways.

It is generally correct, though perhaps not as refined as what I could write on the topic. My expectations were low, and I can honestly say it exceeded them. While I’m not impressed by its knowledge, my prompt wasn’t exhaustive either.

Some people are captivated by telescopes and their ability to peer out into the void; I like microscopes, which point magnification at a subject within immediate reach. So I turned the software on itself, starting by asking the engine to tell me about the company responsible for producing GPT4All. The following responses were generated using the gpt4all-l13b-snoozy model, which is open source and allows outputs to be used for commercial purposes. The factual accuracy of these outputs is unconfirmed.

Prompt: Hello, please write a paragraph about the company Nomic AI which includes when it was founded, where it is based, and who runs the company

Snoozy: Nomic AI is a company that was founded in 2020. It is based in New York City and is run by a team of experienced professionals in the field of artificial intelligence. The company's mission is to revolutionize the way businesses operate by providing cutting-edge AI solutions that can help them make better decisions, optimize their operations, and improve their overall performance. Nomic AI's team of experts has a deep understanding of the latest advancements in AI and is committed to helping businesses stay ahead of the curve in this rapidly evolving field.

Next, I asked it to tell me about the software itself for a bit of reflection, if that is the right term for this context.

Prompt: Hello, please write a paragraph about GPT4All which includes who created it, what it does and how it does what it does

Snoozy: GPT4All is an AI-powered chatbot that was created by a team of experienced developers. It is designed to assist users with a wide range of tasks, from answering questions to providing recommendations and even generating new ideas. GPT4All uses a cutting-edge AI model called GPT-4, which is one of the most advanced language models available today. This model allows GPT4All to understand and respond to complex questions and requests, making it a valuable tool for businesses and individuals alike. GPT4All is able to perform these tasks by analyzing the user's input and using its advanced AI capabilities to generate relevant and useful responses. Overall, GPT4All is a powerful tool that can help users save time and get the information they need quickly and easily.

Then, I asked the model to tell me about itself, which I think of as a deeper form of introspection.

Prompt: Hello, please write a paragraph about the gpt4all-l13b-snoozy 13B model which includes who created it, what it does and how it does what it does

Snoozy: The gpt4all-l13b-snoozy 13B model is a powerful AI model that was created by a team of experienced developers. It is designed to assist users with a wide range of tasks, from answering questions to providing recommendations and even generating new ideas. The gpt4all-l13b-snoozy 13B model uses a cutting-edge AI model called GPT-4, which is one of the most advanced language models available today. This model allows the gpt4all-l13b-snoozy 13B model to understand and respond to complex questions and requests, making it a valuable tool for businesses and individuals alike. The gpt4all-l13b-snoozy 13B model is able to perform these tasks by analyzing the user's input and using its advanced AI capabilities to generate relevant and useful responses. Overall, the gpt4all-l13b-snoozy 13B model is a powerful tool that can help users save time and get the information they need quickly and easily.

Like many projects, Nomic operates a Discord server for its burgeoning community, so I joined. The team is very responsive, so I reached out to one of the cofounders for a quote about where the company came from and what it intends to do next.

“Large language models are the foundational technology that the next generation of software will be built on. A call to an LLM in the 2020's will be as prevalent as a Linux kernel system call. There are currently 3 organizations that control access to this fundamental technology. The goal of GPT4All is to put a powerful and useful LLM onto every desktop and server in the world, effectively decentralizing and democratizing access.

We're releasing an upgraded desktop interface that runs an LLM on any computer this week at gpt4all.io, a Discord bot which anyone can use from their phones, an API that replicates the OpenAI API but that you can host locally, and a model that is significantly better than every other open source model in existence.”

~ Andriy Mulyar - Cofounder of Nomic AI
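The API mentioned in the quote is described as replicating OpenAI’s. Assuming it follows OpenAI’s chat completions format (a POST to `/v1/chat/completions` with a `messages` list, and the reply text in `choices[0].message.content`), a client needs only the Python standard library. The base URL, port, and model name below are hypothetical placeholders; check your local server’s documentation for the real values.

```python
import json
import urllib.request

BASE_URL = "http://localhost:4891/v1"  # hypothetical local server address
MODEL_NAME = "gpt4all-l13b-snoozy"     # hypothetical; use a model your server exposes


def build_chat_request(prompt: str, temperature: float = 0.7) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    body = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]


# With a compatible server running locally:
# print(ask("Tell me about the 1893 Chicago World's Fair."))
```

Because the request shape mirrors OpenAI’s, the appeal is that code written against the hosted service could, in principle, be pointed at a machine you control by changing only the base URL.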

Plenty has been said about OpenAI’s ChatGPT and editorial inference engines in general, and they are a revolution in generative creation. This article has attempted to look not so much at the external waves of change as at what they indicate about how we will change, like peering into a crystal ball with which we share a neuronal link. Perhaps that is the only kind there is: a Magic 8 Ball revealing which side of the coin flip we wanted all along, with the capacity to distill that from the social level down to the personal, because these models are trained on data produced by our civilizations.

While I’m on the metalanguage topic, I’m not on board with the term “hallucinations” because it’s denotationally poor in this application. I think the models are doing incredibly well with regard to how intelligent they are, considering the quality of the meta/data we have trained them on, which is broad. Additionally, I’m not certain we’ll ever develop models or engines that satisfy everyone, because one person’s bias is another’s “hallucination” and yet another’s objective fact.

One way to increase the clarity of the output from these engines is to improve the quality of the input prompt, and in some cases even to create an additional layer of contextualization on top of the main model.

Prompt design is like programming a model with a set of instructions that gently guide it towards more desirable behavior. Large language models (LLMs) are built on massive datasets, learning new concepts by ingesting more data. They're like predictive text on steroids, parroting what they've learned with a little "chaos" programmed in to generate novel sequences of text. While not perfect, they're still a useful, stochastic parrot.

However, without specific instructions or context, LLMs will only provide general, broad outputs. It's a case of a broad ask yielding a broad response. For the most desirable outputs from the model, it's useful to provide large "One-shot" prompts with clear instructions. Including templates or examples in the initial prompt can communicate patterns and nuances for the model to follow.

Think of LLMs as a giant puzzle: give it the right pieces, and it can produce an incredible image. But if the pieces are missing or jumbled, the resulting image will be unclear and incomplete. Effective prompt design ensures that the model has all the necessary pieces to produce the best possible output.

~ Alex Patin, Designer & Technologist
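Alex’s advice about large one-shot prompts with clear instructions and a template can be sketched as a small Python helper that places the instructions and a single worked example ahead of the real request. The `###` section headers and the helper’s name are assumptions for illustration; different models respond best to different prompt formats.

```python
def build_one_shot_prompt(
    instructions: str,
    example_input: str,
    example_output: str,
    task_input: str,
) -> str:
    """Assemble instructions, one worked example, then the real task.

    The example shows the model the pattern and tone to follow; the prompt
    ends with an open "Output" section for the model to complete.
    """
    return (
        f"### Instructions:\n{instructions}\n\n"
        f"### Example input:\n{example_input}\n\n"
        f"### Example output:\n{example_output}\n\n"
        f"### Input:\n{task_input}\n\n"
        "### Output:\n"
    )


prompt = build_one_shot_prompt(
    instructions="Write a two-sentence summary of a historical event for a general audience.",
    example_input="The 1876 Centennial Exposition",
    example_output=(
        "The Centennial Exposition was a world's fair held in Philadelphia in 1876. "
        "It marked the 100th anniversary of the Declaration of Independence."
    ),
    task_input="The 1893 Chicago World's Fair",
)
print(prompt)
```

A single example is often enough to set length and structure; swapping in a different example is an easy way to iterate on the output, much like adjusting parameters in an image generator.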

Super-prompts can enable dynamic, in-depth connections that produce more deeply integrated outputs over the course of a conversation with a chatbot. Alex was one of the first people to show me how to dig deeper into generative AI and has been refining a collection of super-prompts that you can use yourself.
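The idea of a contextual layer on top of the main model can be approximated by a thin wrapper that prepends the same super-prompt to every turn and replays recent conversation history, so the model keeps its context across a chat. This Python sketch is hypothetical: `generate` stands in for whatever function calls your local model, and the `User:`/`Assistant:` transcript format and six-turn window are assumptions, not Alex’s actual super-prompts.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class SuperPromptSession:
    """Wraps a text-generation function with a persistent super-prompt."""

    super_prompt: str
    generate: Callable[[str], str]  # hypothetical: your local model call
    max_turns: int = 6              # past exchanges replayed each turn (assumption)
    history: List[Tuple[str, str]] = field(default_factory=list)

    def render(self, user_message: str) -> str:
        """Build the full prompt: super-prompt, recent history, new message."""
        recent = self.history[-self.max_turns:] if self.max_turns > 0 else []
        lines = [self.super_prompt, ""]
        for user, reply in recent:
            lines += [f"User: {user}", f"Assistant: {reply}"]
        lines += [f"User: {user_message}", "Assistant:"]
        return "\n".join(lines)

    def send(self, user_message: str) -> str:
        """Send one message, record the exchange, and return the reply."""
        reply = self.generate(self.render(user_message)).strip()
        self.history.append((user_message, reply))
        return reply


# Stand-in model for demonstration: it only reports how long its prompt was.
session = SuperPromptSession(
    super_prompt="You are a careful historian. Say so when you are unsure of a fact.",
    generate=lambda prompt: f"(received {len(prompt)} characters)",
)
session.send("When did the 1893 Chicago World's Fair open?")
print(session.render("Who designed its grounds?"))
```

Capping the replayed history keeps the prompt within the model’s context window; a longer window preserves more of the conversation at the cost of slower generation on local hardware.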

Additionally, I am keeping an eye on a few other developments in this space and may report on them as they emerge. In the meantime, take advantage of what is available today, because you can never be sure what will happen on the bleeding edge.

I’m hosting a Twitter Space to discuss the topic and this article later this afternoon: Tuesday, May 2nd, at 4pm CST.