Artificially Yet Intelligently Bringing the Past to Life

Putting color and imagination into Chicago 1893 photography, new and old

Enter the World’s Fair

If we have never crossed paths before, this is our chance to get acquainted and cover one of my favorite topics: the Columbian Exposition. Luckily, there’s no shortage of angles on it. Ever since I first laid my hands on “The Dream City”, a collection of official fair photographs taken by Charles Arnold in Jackson Park during the event, I’ve been pretty obsessed with the fair.

What started as a simple Twitter thread to share some of those photos has grown into a book, a documentary, and a branded online presence on Facebook as well as Twitter.

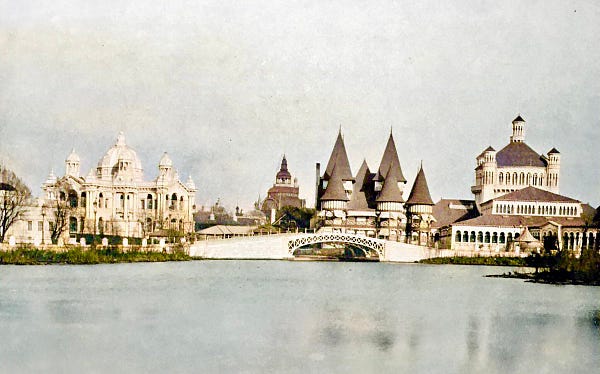

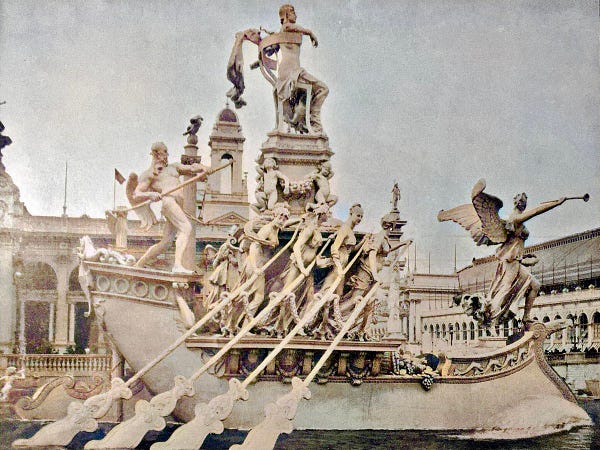

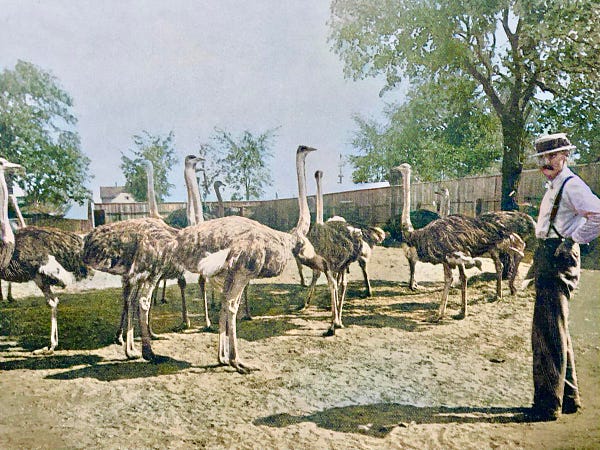

As we get closer to releasing the next phase of the Chicago 1893 media project, I thought it would be fun to offer up a collection of algorithmically colorized photos, some of which have been hanging around since 2021.

Perhaps what makes colorized versions of photos that were originally black and white or sepia toned so captivating is that they shorten the distance between us and the time when the pictures were taken.

It is fair to say the original photos are difficult to interpret at times because of their monochromatic nature, but once you put some color into these guards’ faces or blue into the waves, the subjects become immediately alive again, almost moving. I still find new things in the images while reviewing them.

There’s an added sharpness and depth to the photos once color comes into play. It’s as if the material textures and environmental elements finally communicate that they were part of a three-dimensional world, just like the one we live in now. The color processing on the picture of the Japanese village is among my favorites of the nearly two dozen images I ran through the algorithm.

These last few colorizations have a few interesting results, I think. This collection of photos was originally made available in the Twitter Community.

Leaving It to the Algorithm

I tested at least eight online recoloring algorithms with this picture. These are the three most distinct renders, as many of the others produced seeming duplicates or were of lower quality in one way or another.

Not all algorithmic coloring is the same; however, some results are exactly the same or at least quite similar.

The full depth of colors is not recreated in these renditions, nor are the colors rendered with exact fidelity (as you can see). Additional saturation and processing were done on my iPhone. Not every image pops from manipulation of this kind, but my opinion is that a little added saturation doesn’t hurt.
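For anyone curious what “a little added saturation” means in practice, here is a minimal sketch in Python. It is only an illustration of the general idea, not what the iPhone editor actually does under the hood: the function name `boost_saturation` and the default `factor` of 1.2 are my own assumptions, and it works on a single pixel using the standard library’s `colorsys` module.

```python
import colorsys


def boost_saturation(rgb, factor=1.2):
    """Scale the saturation of one (r, g, b) pixel (0-255 ints) by `factor`.

    Hypothetical helper for illustration only; real photo editors
    likely use more sophisticated, perceptually tuned adjustments.
    """
    # Convert 0-255 channels to the 0.0-1.0 range colorsys expects.
    r, g, b = (channel / 255.0 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    # Raise saturation, but never beyond the maximum of 1.0.
    s = min(1.0, s * factor)
    r2, g2, b2 = colorsys.hsv_to_rgb(h, s, v)
    return tuple(round(c * 255) for c in (r2, g2, b2))


# A muted, grayish blue becomes a slightly richer blue.
muted_blue = (120, 140, 160)
print(boost_saturation(muted_blue, 1.3))
```

Working in hue, saturation, and value keeps the hue and brightness of each pixel fixed while only the intensity of the color changes, which is why a modest factor tends to enrich a colorized photo without shifting its tones. Pure grays have no saturation to scale, so they pass through unchanged.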

These three pictures illustrate a nice cross section of the experiences available on the grounds that year.

I particularly like the doctored expressions on the men’s faces, which likely look that way because they moved during exposure.

Modern Eyes

What is valuable is the capacity to enliven these images beyond the black and white and sepia tones of the original captures, to let our modern eyes, overwhelmed by blue light and neon, know that the past was not a monotonous or washed out time, and to understand that these scenes were as incredible then as the wonders we manufacture today.

The colorizations have a few interesting results, I think. Do you have any favorite pictures from this series? If you’d like to colorize photos, I recommend Image Colorizer for its nuance and the final resolution of the images it turns out.

Historical Inhuman Elements

Inspiration is critical and can seem to be in short supply at times. Looking ahead with a spark in your eye makes each new day brighter. What would you do to capture that feeling for a while? The two topics that have instilled that feeling in me lately are Extended Reality and AI, which I defined broadly in my last article.

Since last summer, image generation by AI platforms like Midjourney and Stable Diffusion has taken over the social networks while sparking a debate about creativity as well as litigation. I’m on both sides of that argument: I don’t think the models should train on media currently protected by copyright, but I also believe they should be available to everyone.

There is certainly value to these engines, but I think they can do a better job of reporting what is occurring under the hood. Personally, I choose to use styles from deceased artists, but I wasn’t doing that initially. The creations that were generated here for Chicago 1893 likely include stylistic references to artists still making their way.

Obviously, I’m not trying to monetize these works, so I think it’s generally alright, but I’d like to avoid infringing on the lifeblood of other artists. That’s an integrity issue.

The earliest trial images were made with DALL-E, but I found its results too fantastic and the platform too restrictive due to the dimensions and prompt methodology. Also, I just wasn’t very good at it at that time. I’ve created over 100 images in the platform and haven’t loved the results, which are too abstract at times for my preferences.

Later in the summer I experimented with Midjourney after gaining access to their Discord server. They highly limit the number of outputs for their free tier, but I was able to use it a few times to iterate on prompts. What it did successfully combine, in my opinion, were the aesthetics of the oil paintings done at the event and of the photos that Charles Arnold captured on the grounds.

Some months later, I spent time with a friend who had gone much further down the rabbit hole in terms of understanding the process and connecting the dots to get reliable outputs. He was running a variant of Stable Diffusion locally on his desktop computer. It was really fun to push rolls and to give it nonsense prompts just for a laugh.

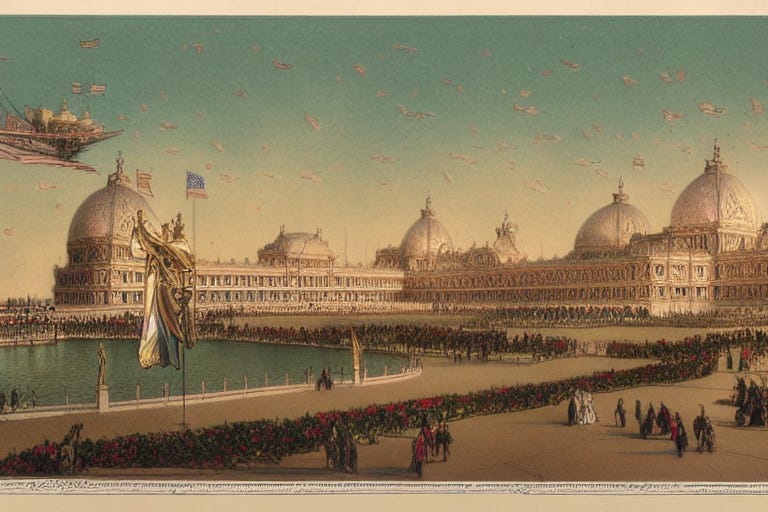

Late last year my computer breathed its final compute. I replaced it with a new MacBook with an M1 chip, which opened up the option to start processing images locally on my own machine. You may have noticed that the images created with AI generators have common elements. That is because I have been using a similar prompt to compare outputs: “Grand Basin of the Columbian Exposition with airships in the sky”. This places a few well-referenced elements relevant to the event, particularly the Court of Honor, but also adds a fantastic component to the imagery that calls back to scenes from a book by Thomas Pynchon called “Against the Day”.

Recently, I started creating panoramas with a perspective view, then importing them into Gravity Sketch on the Meta Quest 2. It’s possible to warp the images, bending them in three-dimensional space to create a sense of depth.

More to come for Chicago 1893 this year. I can’t wait to show you what else we have been working on…